Establishing robust security standards for application development is integral in today’s digitally connected world. The Open Web Application Security Project (OWASP) has contributed significantly to this endeavor by creating the OWASP Top 10 list. This list is recognized globally as the standard awareness document. It outlines the most critical security risks plaguing web applications, aiming to educate developers, security professionals, and organizations about these vulnerabilities.

The OWASP Top 10 serves as a roadmap for web application developers, encapsulating the common vulnerabilities and the preventive measures. For security professionals, this list acts as a reference for conducting comprehensive security testing, allowing them to prioritize risks that call for immediate remediation. For organizations, it provides guidelines for defining secure development protocols, conducting thorough risk assessments, and implementing steadfast security control measures.

The evolving digital landscape, dominated by web applications, has brought APIs (Application Programming Interfaces) to the fore. APIs come with their own set of unique vulnerabilities. OWASP has developed a distinct list known as the ‘OWASP API Security Top 10‘ to address these. This list focuses on the most significant threats unique to the processing of API calls, providing necessary guidance for safe API development and integration.

The New Frontier in Software Development

As software development evolves, the integration of machine learning and Large Language Models (LLMs) is transforming applications. However, with this advancement comes mounting security concerns. In the fascinating landscape of LLMs, we encounter a unique intersection of human and programming languages in software development for the first time. The incredible potential this blend of languages unlocks comes with its novel security challenges.

Prompt Injection: A Pervasive Threat

Consider, for instance, ‘prompt injection’ – a rapidly emerging threat in the LLM realm. It involves manipulating LLM outputs using subtle, specialized instructions, potentially leading LLMs and their associated applications to run unauthorized code, resulting in severe security breaches.

Beyond Prompt Injection: Viewing Vulnerabilities Holistically

However, is prompt injection the only risk associated with LLMs? Deeper exploration reveals that prompt injection could be a catalyst, leading to other vulnerabilities. This inquiry raises essential questions. Do we need to address other connected risks while focusing solely on prompt injection? Is our attention to this single vulnerability hindering our view of the broader risk landscape?

Indeed, discussions centering around LLM vulnerabilities must include the interconnected nature of these risks. The security approach for LLMs should be broad-based, encompassing more than just a single aspect like prompt injection. This perspective urges us to look beyond isolated vulnerabilities and examine their interconnections, which could hold the insight to mitigate future LLM application security concerns.

The Need for Comprehensive AI System Assessment

As AI-based systems begin integrating into society, it becomes crucial to assess these systems holistically. Recognizing that an AI ecosystem extends beyond LLMs and models enables us to understand how to attack and defend such systems. We especially need to be mindful of where AI systems intersect with standard business systems, as these intersections can allow actions in the real world.

OWASP Top 10 for LLM Applications

In light of these insights, the OWASP Top 10 for LLM applications aims to address and provide solutions for these vulnerabilities beyond prompt injection. This much-needed resource equips us to navigate the captivating yet complex world of LLM security, paving the way for secure AI system integration into our society.

A snapshot of the top 10 vulnerabilities

The following is a summary of the vulnerabilities that make up the OWASP Top 10. Detailed prevention methods are available on their respective pages.

- LLM01: Prompt Injection – Prompt Injection vulnerability is when an attacker misleads an LLM with cunning inputs to unwittingly perform actions such as data theft, initiating unauthorized purchases, social engineering, or other problems.

- LLM02: Insecure Output Handling – This involves poor management and inadequate validation of outputs from LLMs, which can lead to successful exploits resulting in various vulnerabilities, from XSS and CSRF in web browsers to SSRF, privilege escalation, and remote code execution on backend systems.

- LLM03: Training Data Poisoning – Machine learning uses diverse training data and deep neural networks for output generation. However, data poisoning can disrupt model operations and accuracy, posing risks such as software exploitation and reputation damage.

- LLM04: Model Denial of Service – Similar to a DoS attack, an attacker can overly interact with an LLM, consuming excessive resources, degrading service quality, and potentially incurring high costs.

- LLM05: Supply Chain Vulnerabilities – Supply chain vulnerabilities in LLMs can impact training data and models, causing biases, breaches, and system failures, enhanced by risks from third-party inputs and LLM plugin extensions.

- LLM06: Sensitive Information Disclosure – LLM applications can inadvertently disclose sensitive data and information, necessitating user awareness for safe interaction and potential risks of unintentional sensitive data input.

- LLM07: Insecure Plugin Design – LLM plugins, which operate automatically and without validation, can be exploited through malicious requests, leading to issues like remote code execution, data exfiltration, and privilege escalation.

- LLM08: Excessive Agency – An LLM-based system’s ability to independently interface with other systems and make decisions can lead to excessive agency. This vulnerability causes damaging actions in response to unexpected outputs. This vulnerability, which results from extreme functionality, permissions, or autonomy, can impact confidentiality, integrity, and availability depending on the systems the LLM interacts with.

- LLM09: Over-reliance – Overreliance on erroneous LLM-generated information or source code can result in security breaches, misinformation, reputational damage, and significant operational safety and security risks.

- LLM10: Model Theft – Unauthorized access to LLM models by malicious actors can result in theft or compromise of these valuable assets, leading to significant financial loss, reputational damage, and unauthorized usage or exposure of sensitive information.

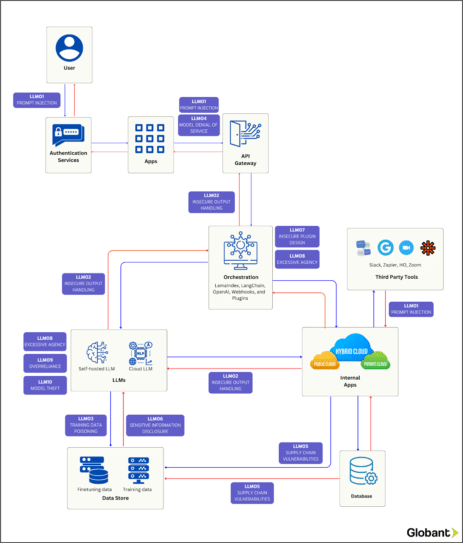

Mapping Vulnerabilities in Enterprise Application Architecture

At Globant, we’ve created the diagram below to map the vulnerabilities mentioned above onto a generic enterprise application architecture. This mapping should provide insight into potential attack vectors at various stages of the application’s process.

Tying it together

The original OWASP Top 10 heralded a groundbreaking evolution in web application security, a transformation equally matched by the introduction of the API Top 10. Such moments in history spurred the birth of unprecedented best practices, successfully creating a thriving market universe for security and observability tools.

Poised on the brink of another paradigm shift, the OWASP Top 10 for LLM applications promises a similar transformative sphere. Coupled with a robust framework of best practices to quell emergent issues, it forges a solid foundation. Given the increasing integration of Large Language Models in enterprise application development, we stand on exciting grounds, ready to spectate this invigorating shift.

At Globant, we are staunch advocates of stringent security measures and firmly adhere to a ‘shift left’ approach when building dependable systems for our clients. Armed with a vigilant eye on the evolving terrain of LLM security, we are committed to delving deeper and curating more insightful content in this intriguing domain in the forthcoming times.