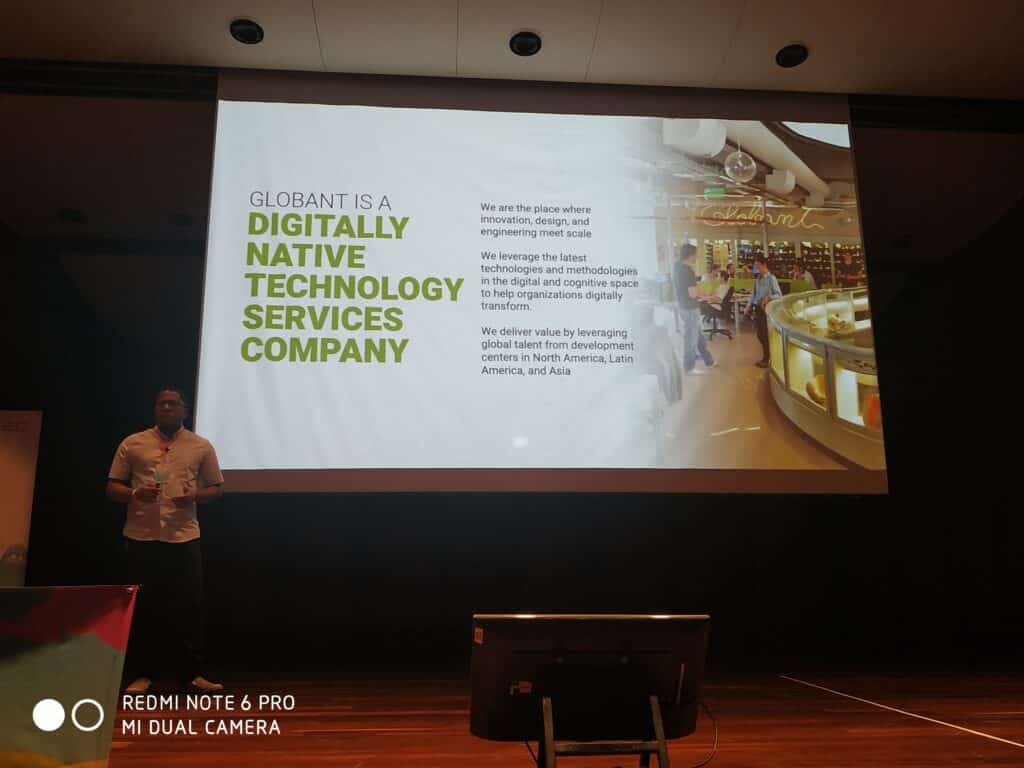

Globant participated in PyCon 2020 in Medellín, Colombia: three days dedicated to everyone who loves the Python programming language.

The event featured talks, activities, and networking among developers, companies, and customers.

As part of PyCon 2020, Globant presented three digital experiences at its stand, three workshops, and a presentation about the company. Attendees could discover how Globant uses Python and AI.

The following is a brief summary of what took place at the event:

Technological Experiences

One of the experiences presented at the stand was Globant Minds, an interactive application whose main objective is to showcase Globant’s capabilities in the field of computer vision.

Globant Minds showed attendees several AI applications for images, such as face recognition, gesture classification, and style transfer.

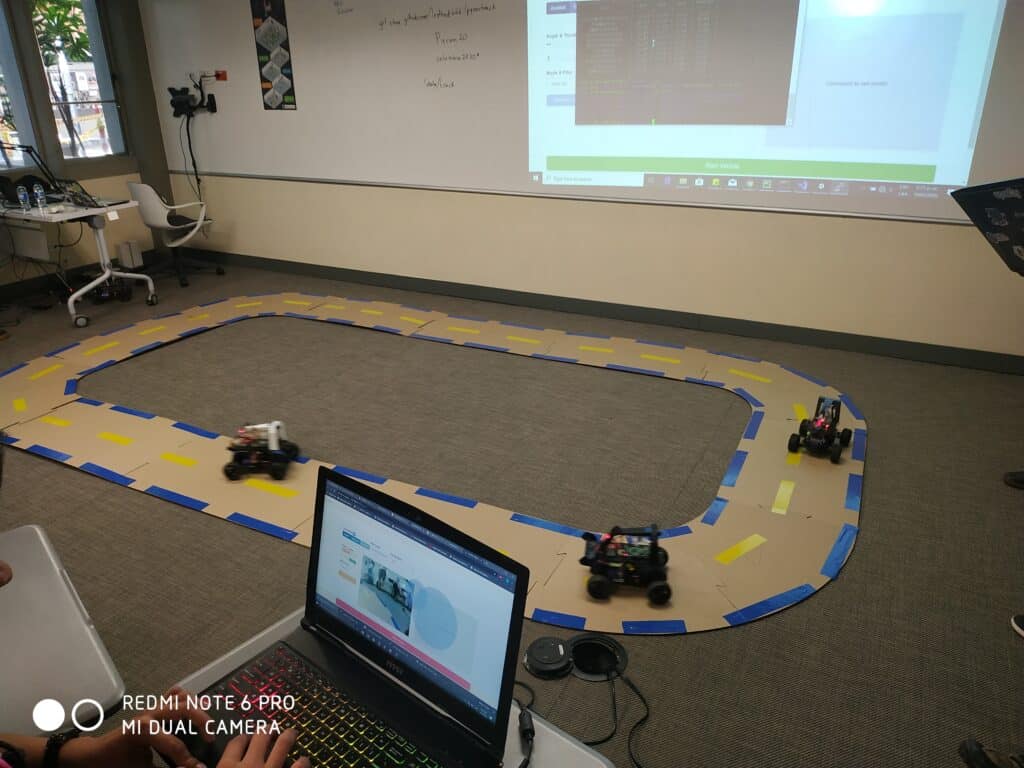

In the field of autonomous driving, Globant showed a functional scale model of an autonomous car.

The vehicle, known as Donkey Car, was on full display at the Globant booth.

The speakers showed attendees how the software is developed, tested, and deployed in a controlled environment.

Finally, as part of the digital experiences, Globant presented Augmented Coding, one of its internal initiatives focused on increasing the capabilities of Globers through AI.

Augmented Coding, in particular, seeks to facilitate the software development process through tools such as automatic documentation and semantic code search.

For more information about the potential of these technologies to transform organizations, you can visit Augmented Globant.

The Workshops

One of the workshops covered Data Processing and Analysis with Apache Spark. Participants familiarized themselves with the framework and its diverse set of APIs, which can be used from Python to work with large volumes of data, draw insights, and calculate metrics.

The workshop began with a brief explanation of the MapReduce paradigm and Hadoop. Participants then learned about Spark’s importance in the world of Big Data, its advantages, and its architecture.

The presenters then showed in depth how to use Spark to process and query real data sets prepared specially for the workshop.
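The MapReduce paradigm that opened the Spark workshop can be illustrated with a classic word count. The following is only a minimal sketch in plain Python, not code from the workshop: it runs on a single machine, the function names (`map_phase`, `shuffle_phase`, `reduce_phase`, `word_count`) are hypothetical, and the sample lines are invented for illustration. Frameworks such as Hadoop and Spark distribute these same steps across a cluster.

```python
from collections import defaultdict


def map_phase(line):
    """Map: emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle_phase(pairs):
    """Shuffle: group all emitted values under their key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(groups):
    """Reduce: collapse the list of values for each key into one total."""
    return {key: sum(values) for key, values in groups.items()}


def word_count(lines):
    """Run the three phases in sequence over an iterable of text lines."""
    pairs = [pair for line in lines for pair in map_phase(line)]
    return reduce_phase(shuffle_phase(pairs))


# Hypothetical sample input, not data from the workshop.
sample_lines = ["python at pycon", "python and spark"]
counts = word_count(sample_lines)
print(counts)
```

Because each line is mapped independently and each key is reduced independently, both phases can run in parallel on different machines, which is what makes the model suitable for large volumes of data.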

In the Facial Recognition workshop, participants explored this area. Attendees built a pipeline that ranges from user registration to authentication.

They worked with a custom machine learning model deployed on-premises, as well as a cloud prototype built on the models available from Amazon Web Services and Microsoft Azure.
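A registration-to-authentication pipeline of this kind is often organized around face embeddings: numeric vectors produced by a model, compared by similarity. The sketch below is an assumption about how such a pipeline could look, not the workshop's actual code. The class `FaceAuthenticator`, the threshold of 0.8, the user names, and the three-dimensional vectors are all hypothetical; in practice the embeddings would come from a trained model or a cloud service rather than being typed by hand.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class FaceAuthenticator:
    """Hypothetical registry that stores one embedding per user."""

    def __init__(self, threshold=0.8):
        # Assumed similarity cut-off; a real system would tune this value.
        self.threshold = threshold
        self._registry = {}

    def register(self, user_id, embedding):
        """Registration step: store the user's reference embedding."""
        self._registry[user_id] = list(embedding)

    def authenticate(self, embedding):
        """Authentication step: return the best match, or None if too weak."""
        best_user, best_score = None, -1.0
        for user_id, stored in self._registry.items():
            score = cosine_similarity(embedding, stored)
            if score > best_score:
                best_user, best_score = user_id, score
        return best_user if best_score >= self.threshold else None


# Toy embeddings invented for illustration only.
auth = FaceAuthenticator(threshold=0.8)
auth.register("ana", [0.9, 0.1, 0.0])
auth.register("luis", [0.0, 0.2, 0.9])

print(auth.authenticate([0.85, 0.15, 0.05]))  # vector close to ana's
print(auth.authenticate([0.5, 0.5, 0.5]))     # vector far from both users
```

Keeping the embedding model outside this class means the same registration and authentication logic could sit behind either an on-premises model or a cloud-hosted one.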

If you want to know a little more about our way of working, you can visit us here.